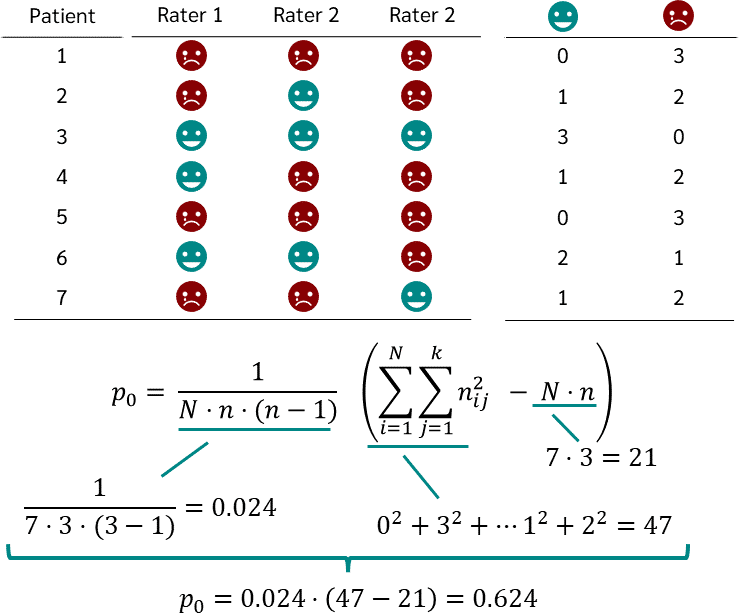

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

![[PDF] Sample size determination and power analysis for modified Cohen's kappa statistic | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e51bec1b939791a897b3153430133087c0d30eb1/10-Table3-1.png)

[PDF] Sample size determination and power analysis for modified Cohen's kappa statistic | Semantic Scholar

Testing the normal approximation and minimal sample size requirements of weighted kappa when the number of categories is large

Table 3 from Sample Size Requirements for Interval Estimation of the Kappa Statistic for Interobserver Agreement Studies with a Binary Outcome and Multiple Raters | Semantic Scholar

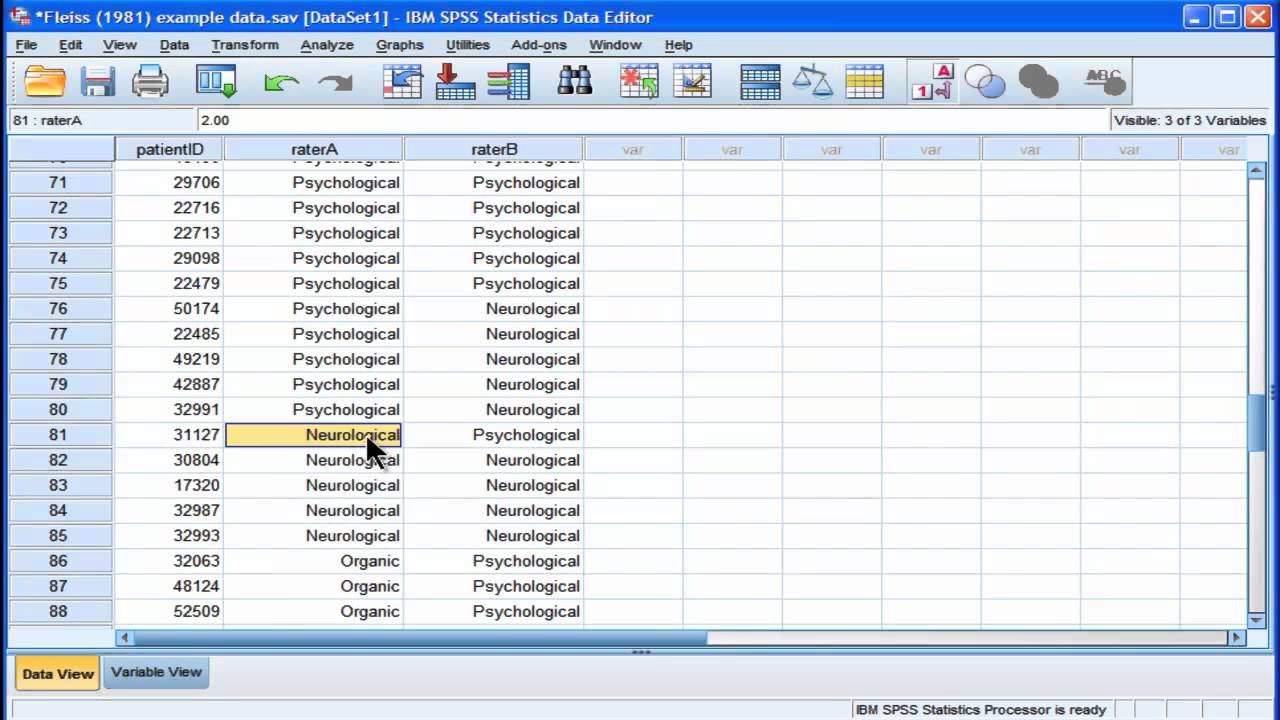

![Fleiss Kappa [Simply Explained] - YouTube](https://i.ytimg.com/vi/ga-bamq7Qcs/maxresdefault.jpg)

![[PDF] Sample-size calculations for Cohen's kappa. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/c59f58e51e97eaa055b450d9f71cac402d7e45ad/4-Table2-1.png)